UX Studies Support

We have knowledge and experience in designing and conducting usability studies.

Mobile UX Studies

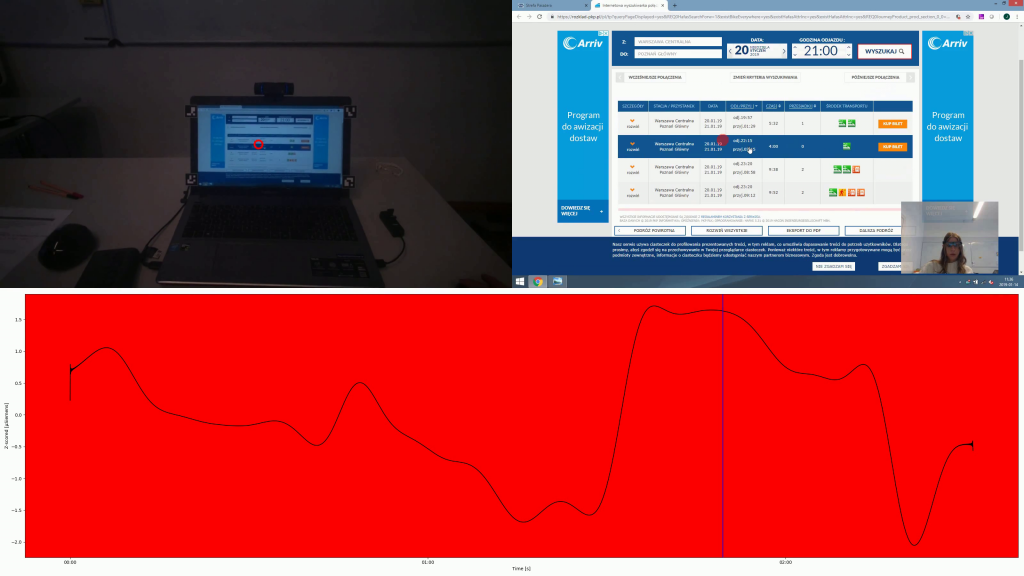

For usability studies of applications on mobile devices, we have developed a proprietary solution that supports both qualitative and quantitative analysis.

Using eye-tracking glasses and an electrodermal activity (EDA; also known as “galvanic skin response”, GSR) sensor, we can examine how applications are used in conditions close to natural ones. We simultaneously record the gaze point and the galvanic skin response, which allows us to estimate the subject’s emotional arousal during task execution.

The result of the study is a recording that shows the subject’s gaze point at each moment, overlaid on a screencast of the smartphone screen and synchronized with the EDA signal. The EDA signal is expressed as a z-score and plotted as an easy-to-analyze graph that shows the strength of the galvanic skin response for a given person throughout the experiment.
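As a rough illustration of what presenting the EDA signal as a z-score means, the sketch below standardizes one person's samples against that person's own mean and standard deviation. The function name `zscore` and the sample values are assumptions made for this example, not part of our actual software.

```python
from statistics import mean, pstdev


def zscore(signal):
    """Standardize one participant's EDA samples to zero mean and unit variance."""
    mu = mean(signal)
    sigma = pstdev(signal)
    if sigma == 0:
        # A flat signal has no variation; return zeros instead of dividing by zero.
        return [0.0 for _ in signal]
    return [(x - mu) / sigma for x in signal]


# Hypothetical EDA samples (in microsiemens) for a single participant.
eda_microsiemens = [2.0, 2.1, 2.0, 3.5, 2.9, 2.2]
eda_z = zscore(eda_microsiemens)
```

Because each value is scaled relative to the same person's baseline, peaks in the standardized signal can be compared across participants whose raw skin conductance levels differ.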

Unlike other solutions, we do not mount the phone on a stand, so the subject can use the device freely. We can carry out the study in any location: a public place, a commercial establishment, or a selected facility visited by customers.

Psychophysiology

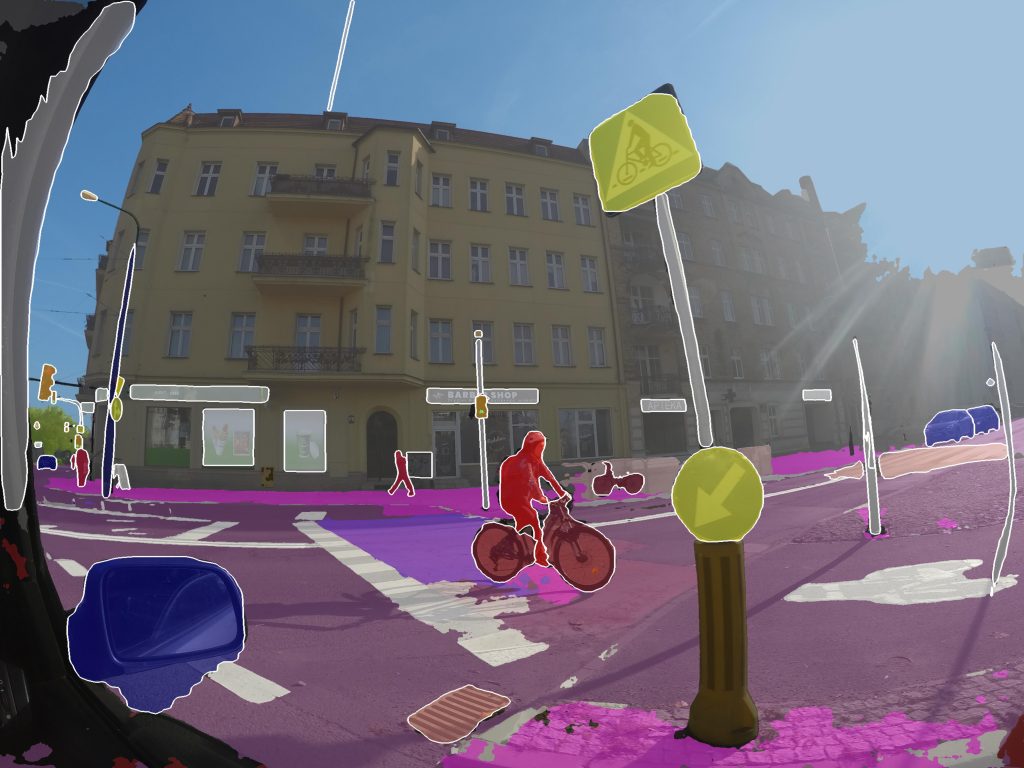

Together with scientific partners, we conduct research in which, besides recording data from biosensors (such as eye-trackers, EDA, and EEG), we develop proprietary software that uses image processing to analyze research results.

An example of such software is a system for automating eye-tracking data mapping that allows for quantitative analysis of research conducted in natural environments.

Client Behaviour Analysis

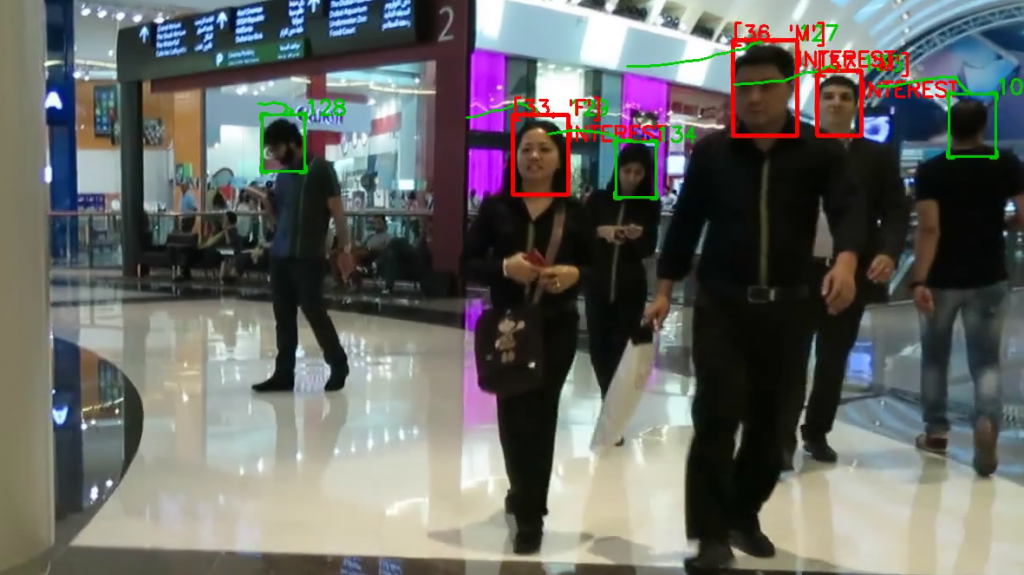

We have experience in automatic processing of video data using both “classical” image processing methods and neural networks for detecting and tracking moving objects.

Using predictive models based on neural networks, we can profile customers by demographic attributes (i.e., gender and age) and assess their interest in a selected object (e.g., a commercial stand).

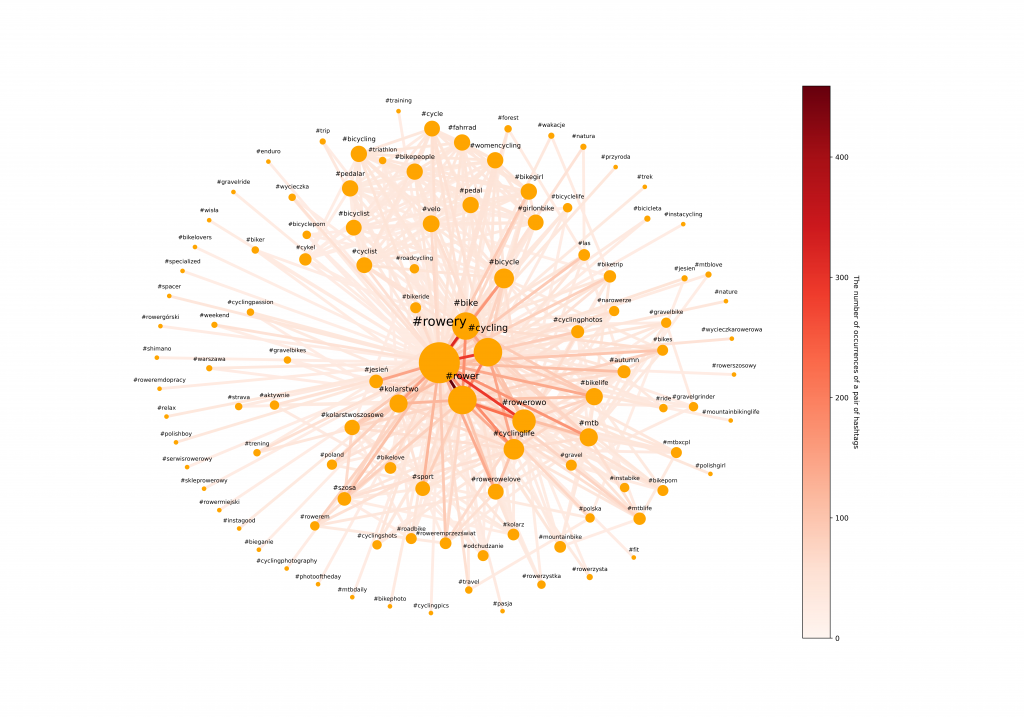

Social Network Analysis

Using modern machine learning methods, such as deep neural networks, we analyze the content of photos posted on social networks. We compare the information obtained from predictive models with the content of post descriptions and hashtags.

Mask Detection

Using neural networks, we can detect people wearing a face mask in both sets of photos and video recordings. This allows us to statistically analyze what proportion of visitors wear masks at a given time, when characteristic changes in the trend occur, and so on.
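The statistical step can be sketched as follows, assuming the detector has already produced one record per detected person. The `(hour, has_mask)` record format, the function name `mask_share_by_hour`, and the sample data are hypothetical and serve only to illustrate the idea.

```python
from collections import defaultdict


def mask_share_by_hour(detections):
    """Return {hour: share of detected people wearing a mask}, ordered by hour."""
    totals = defaultdict(int)
    masked = defaultdict(int)
    for hour, has_mask in detections:
        totals[hour] += 1
        if has_mask:
            masked[hour] += 1
    return {hour: masked[hour] / totals[hour] for hour in sorted(totals)}


# Hypothetical detector output: (hour of day, mask detected?)
detections = [
    (9, True), (9, False), (9, True),
    (10, True), (10, True),
    (11, False), (11, False), (11, True),
]
shares = mask_share_by_hour(detections)
```

Plotting such hourly shares over days or weeks is one simple way to spot changes in the trend.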

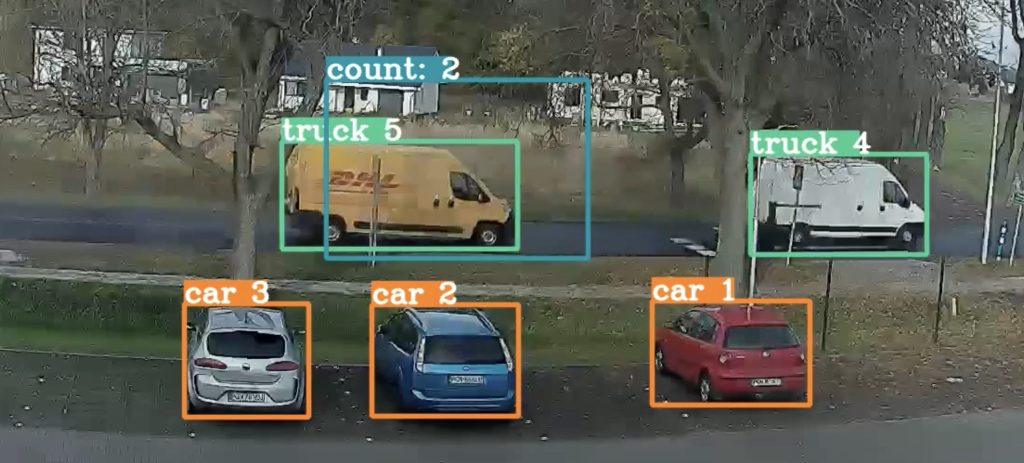

Object Detection

Using neural networks, we can detect various kinds of objects in pictures, videos, and even satellite images.

PosEmo – AI engagement and emotional valence

PosEmo is an attempt to better understand what people think and how they feel, implemented on a larger scale than ever before with state-of-the-art artificial intelligence methods. The solution is based on technology that is already widely available: a simple web camera. It allows you to track the interest and assess the attitude of the person in front of the camera in real time!

See: https://posemo.io